Large language models have recently enabled a prompting based, few-shot learning paradigm referred to as in-context learning (ICL). However, the ICL paradigm can be quite sensitive to small differences in the input prompt, such as the template of the prompt or the specific examples chosen. To handle this variability, we leverage the framework of influences to select which examples to use in a prompt. These so-called in-context influences directly quantify the performance gain/loss when including a specific example in the prompt, enabling improved and more stable ICL performance.

Prompting is a recent interface for interacting with general-purpose large language models (LLMs). Instead of fine-tuning an LLM on a specific task and dataset, users can describe their needs in natural language in the form of a “prompt” to guide the LLM towards all kinds of task. For example, LLMs have been asked to write computer programs, take standardized exams, or even come up with original Thanksgiving dinner recipes.

To elicit better performance, the research community has identified techniques for prompting LLMs. For example, providing detailed instructions or asking the model to think step-by-step can help direct it to make more accurate predictions. Another way to provide guidance is to give the model examples of input-output pairs before asking for a new prediction. In this work, we focus on this last approach to prompting often referred to as few-shot in-context learning (ICL).

Few-shot ICL

In-context learning involves providing the model with a small set of high-quality examples (few-shot) via a prompt, followed by generating predictions on new examples.

As an example, consider GPT-3, a general-purpose LLM that takes prompts as inputs. We can instruct GPT-3 to do sentiment analysis via the following prompt.

This prompt contains 3 examples of review-answer pairs for sentiment analysis (3-shot), which are the in-context examples.

We would like to classify a new review, My Biryani can be a tad spicier, as either positive or negative.

We append the input to the end of our in-context examples:

Review: The butter chicken is so creamy.

Answer: Positive

Review: Service is subpar.

Answer: Negative

Review: Love their happy hours

Answer: Positive

Review: My Biryani can be a tad spicier.

Answer:Negative

To classify the final sentence, we have given this prompt as input to GPT-3 to generate a completion. We simply consider the probability of the model generating the word Negative versus the word Positive, and use the higher-probability word (conditional on the examples) as the prediction.

In this case, it turns out that the model can correctly predict Negative as being a more likely completion than Positive.

Note that in this example, we were able to adapt the LLM to do sentiment analysis with no parameter updates to the model. In the fine-tuning paradigm, doing a similar task would often require more human-annotated data and extra training.

Perils of in-context learning

ICL allows general-purpose LLMs to adapt to new tasks without training. This lowers the sample complexity and the computational cost of repurposing the model. However, these benefits also come with drawbacks. In particular, the performance of ICL can be susceptible to various design decision when constructing prompts. For example, the natural language template used to format a prompt, the specific examples included in the prompt, and even the order of examples can all affect how well ICL performs [ACL tutorial].

In other words, ICL is brittle to small changes in the prompt. Consider the previous prompt that we gave to GPT-3, but suppose we instead swap the order of the first two examples:

Review: Service is subpar.

Answer: Negative

Review: The butter chicken is so creamy.

Answer: Positive

Review: Love their happy hours

Answer: Positive

Review: My Biryani can be a tad spicier.

Answer:Positive

When given this adjusted prompt, the model’s prediction changes and is now incorrectly predicting a positive sentiment! Why did this happen? It turns that in-context learning suffers from what is known as recency bias. Specifically, recent examples tend to have a larger impact on the model’s prediction. Since the model recently saw two positive examples, it spuriously followed this label pattern when making a new prediction. This behavior makes ICL unreliable—the performance should not be dependent on a random permutation of examples in the prompt!

In-context influences

To address this unreliability, we look towards a variety of methods that aim to quantify and understand how training data affects model performance. For example, Data Shapley values and influence functions both aim to measure how much an example affects performance when included in the training dataset. Inspired by these frameworks, our goal is to measure how much an in-context example affects ICL performance when included in the prompt. In particular, we will calculate the influence of each potential example on ICL performance, which we call in-context influences.

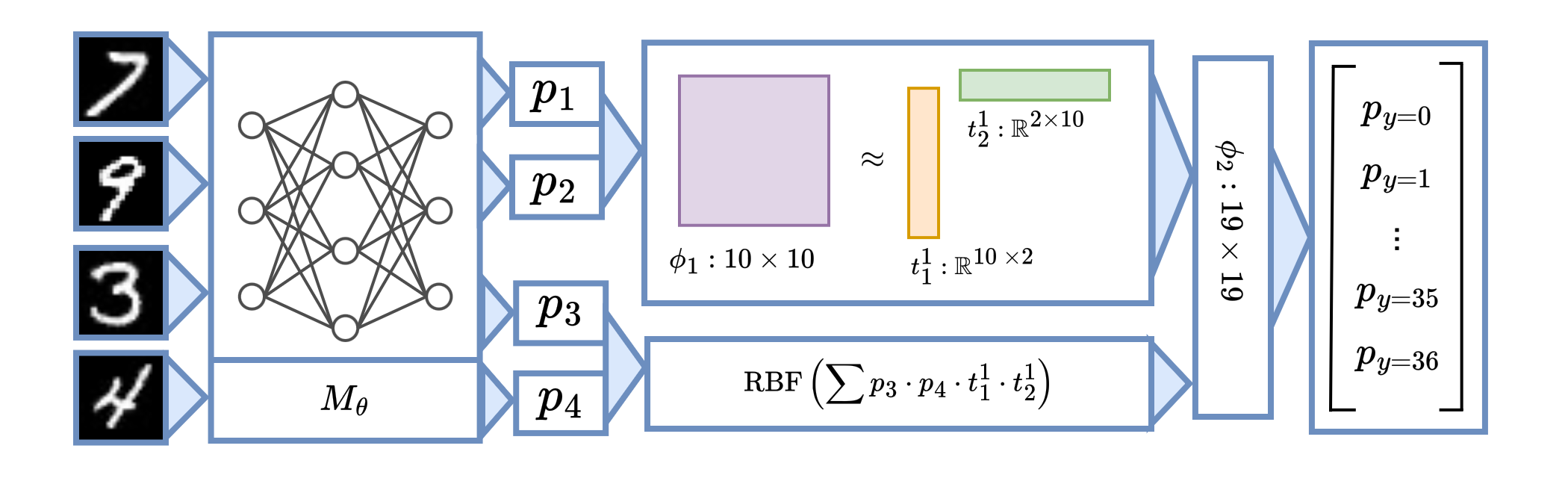

More formally, let \(S\) be a training set and \(f(S)\) be the validation performance after training on \(S\). We calculate in-context influences with a two-step process:

- Collect a “dataset” of \(M\) training (prompting) runs \(\mathcal D = \{(S_i, f(S_i)\}_{i=1}^M\) where \(S_i\subseteq S\) are random subsets of the original training dataset.

- Estimate the influence of each example \(x\in S\) as follows:

\[ {\mathcal{I}(x_j)=\frac{1}{N_j}\sum_{S_i:x_j\in S_i} f(S_i)} - {\frac{1}{M-N_j}\sum_{S_i:x_j\notin S_i} f(S_i)} \]

where \(M\) is the number of total subsets used to estimate influences, \(N_j\) is the total number of subsets containing example \(x_j\), and \(f(S_i)\) is the performance metric when evaluated on the validation set.\(^1\)

In other words, a higher score for \(\mathcal{I}(x_j)\) corresponds to a higher average improvement in validation performance when \(x_j\) was included in the prompt. This is analogous to the meaning of influences in the classic setting, but adapted to the ICL setting: instead of training models on a dataset, we are prompting models on examples.

Distribution of in-context influences

In the following figure, we visualize the distribution of computed influences of training examples on ICL performance.

The two tails of the influence distribution identify highly impactful in-context examples. Examples with large positive influence tend to help ICL performance, whereas examples with large negative influence tend to hurt ICL performance. This observation suggests a natural approach for creating prompts for ICL: we can use examples in the right tail to create the “best” prompt, or use examples from the left tail to create the “worst” performing prompt.

\(^1\)This method of estimating influences with random subsets has similarities to the framework of datamodels, which uses random subsets to train a linear model that predicts performance. In our paper, we also consider a similar analog of the datamodels approach for estimating in-context influences.

Influence-based example selection

Once we have computed in-context influences, we can use these influences to select examples for ICL. Intuitively, examples with more positive influences should lead to better ICL performance. As a sanity check, is this indeed the case?

In the following figure, we partition the training data into 10 percentile bins according to their influence scores, and measure the validation performance of prompts using examples from each bin.

We find a steady and consistent trend: examples with higher influences do in fact result in higher test performance in most models and tasks! Interestingly, we find a significant difference between examples with positive and negative influences: a 22.2% difference on the task WSC and 21.5% difference on the task WIC when top-bin examples are used instead of bottom-bin examples on OPT-30B. This provides one explanation for why the choice of examples can drastically affect ICL performance: according to our influence calculations, there exists a small set of training examples (the top and bottom influential examples) that have a disproportionate impact on ICL performance.

Examples with Positive/Negative Influence

Classic influences have found qualitatively that positively influencial examples tend to be copies of examples from the validation set, while negatively influential examples tend to be mislabeled or noisy examples. Do influences for ICL also show similar trends?

We find that in some cases, these trends also carry over to the ICL setting. For example, here is a negatively influential examples in the PIQA task:

Goal: flashlight

Answer: shines a light

In this case, the example is quite unnatural for the task: rather than flashlight being a goal for shining a light, it would be more natural to have shining a light be a goal for the flashlight. This is an example of how the design of the template can result in poor results for certain input-output pairs. This is especially true when the template is not universally suitable for all examples in the training data.

However, in general we found that differences between examples with positive or negative influences was not always immediately obvious (more examples are in our paper). Although we can separate examples into bins corresponding to positive and negative influence, identifying the underlying factors that resulted in better or worse ICL performance remains an open problem!

Conclusion

In this blog post, we propose a simple influence-based example selection method that can robustly identify low- and high- performing examples. Our framework can quantify the marginal contribution of an example as well as different phenomena associated with ICL, such as the positional biases of examples.

For more details and additional experiments (ablation studies, case studies on recency bias, and comparisons to baselines) please check out our paper and code.

Concurrent to our work, Chang and Jia (2023) also employ influences to study in-context learning. They show the efficacy of influence-based selection on many non-SuperGLUE tasks. You can check out their work here.

Citation

@article{nguyen2023incontextinfluences,

author = {Nguyen, Tai and Wong, Eric},

title = {In-context Example Selection with Influences}, journal = {arXiv},

year = {2023},

}